Tier 1: The experiments that change your economics

These experiments change what the customer pays, what they receive, or how the offer is structured. They're often harder to implement and need more testing before launch, but they consistently produce the largest, fastest results.

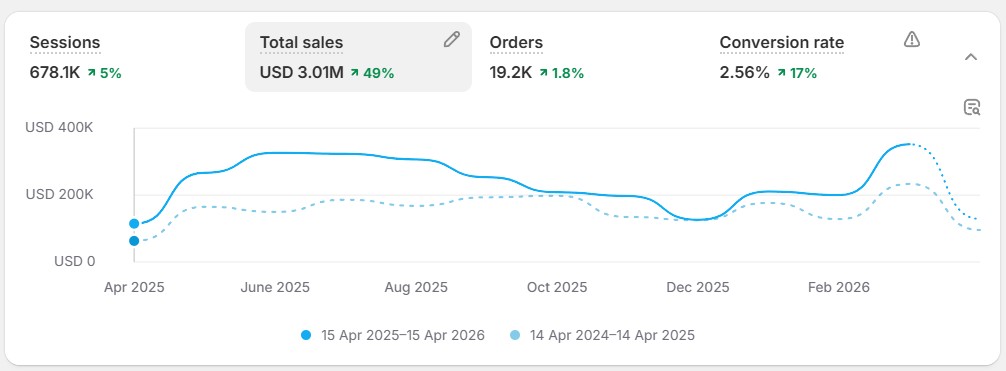

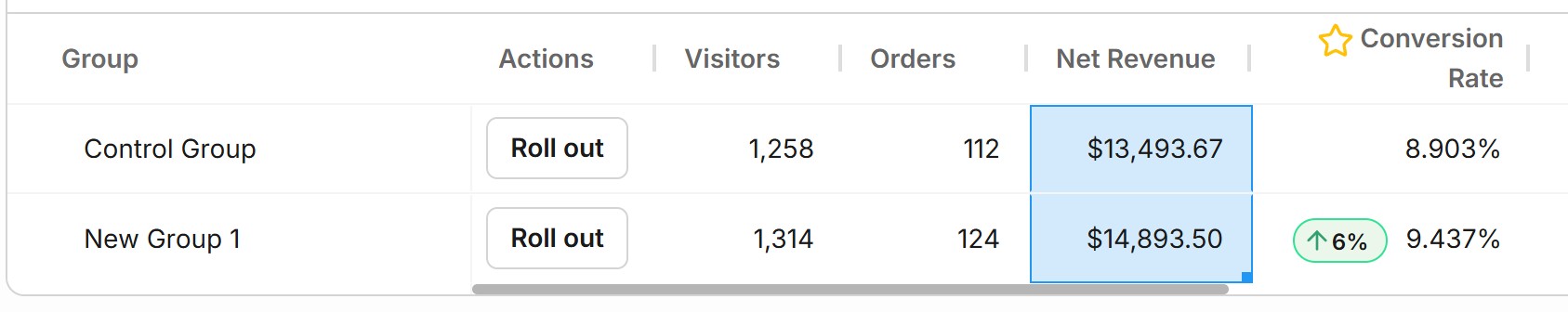

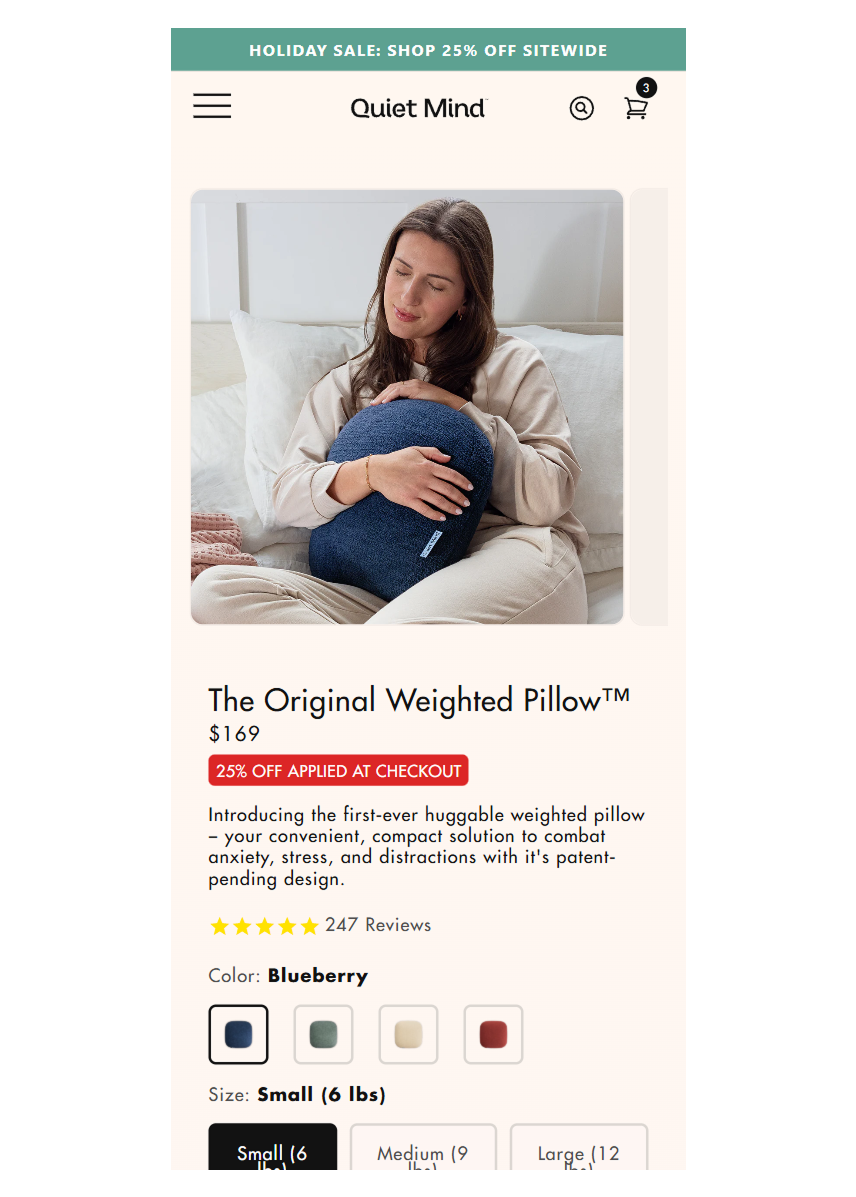

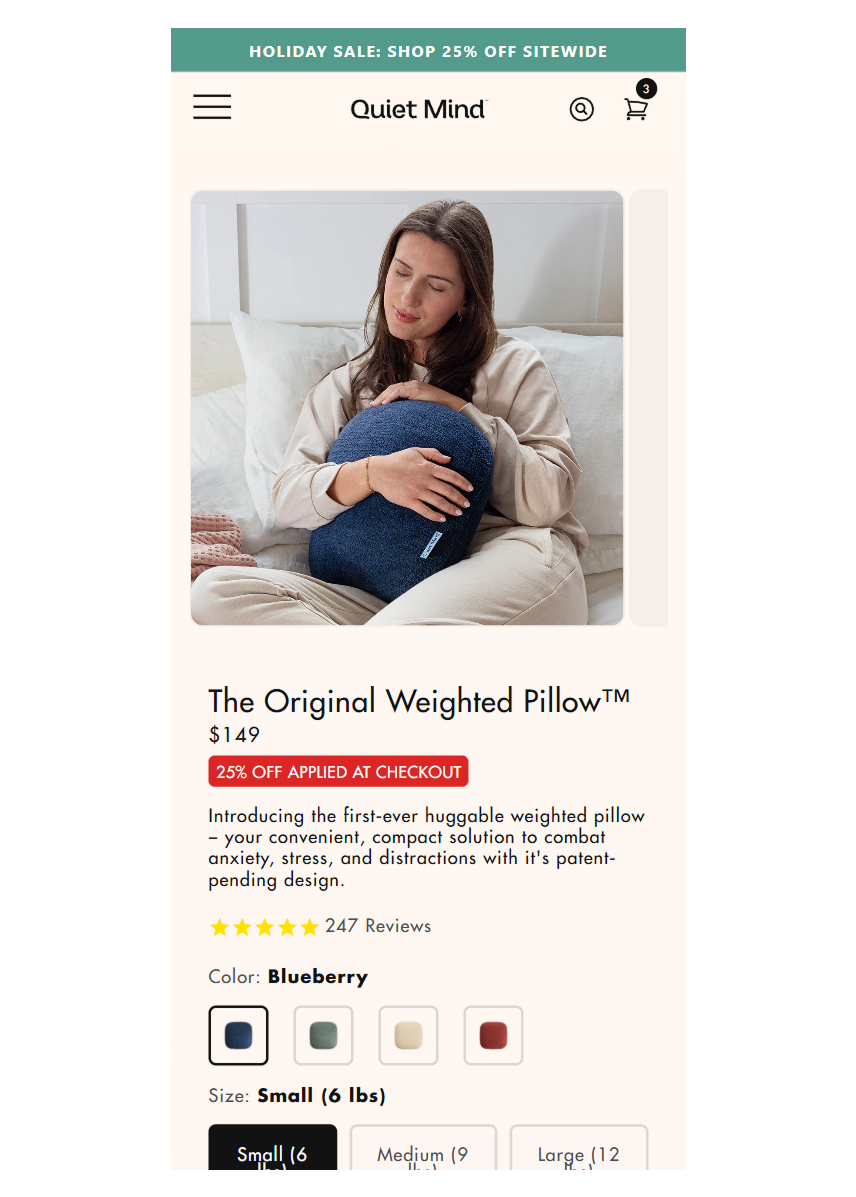

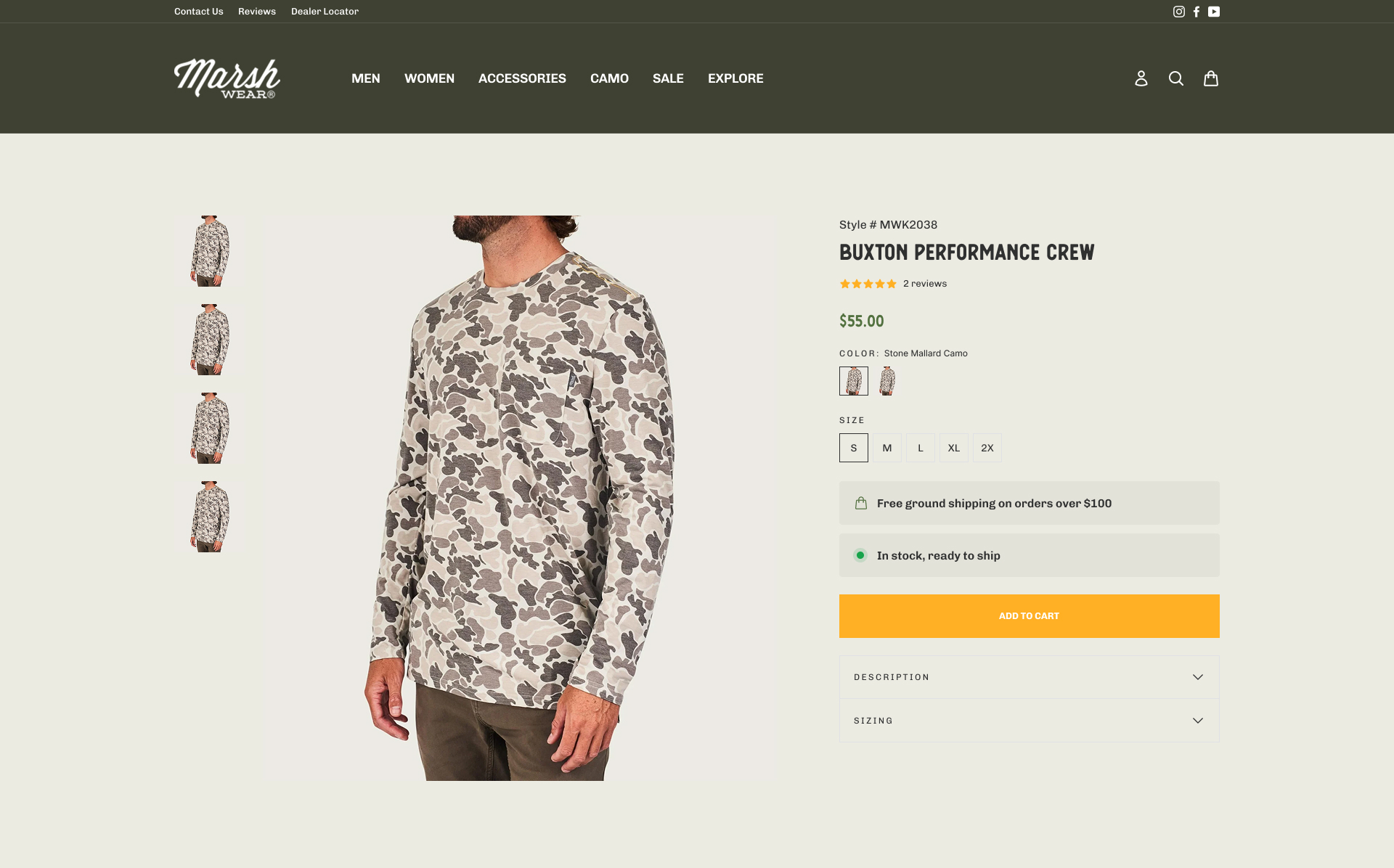

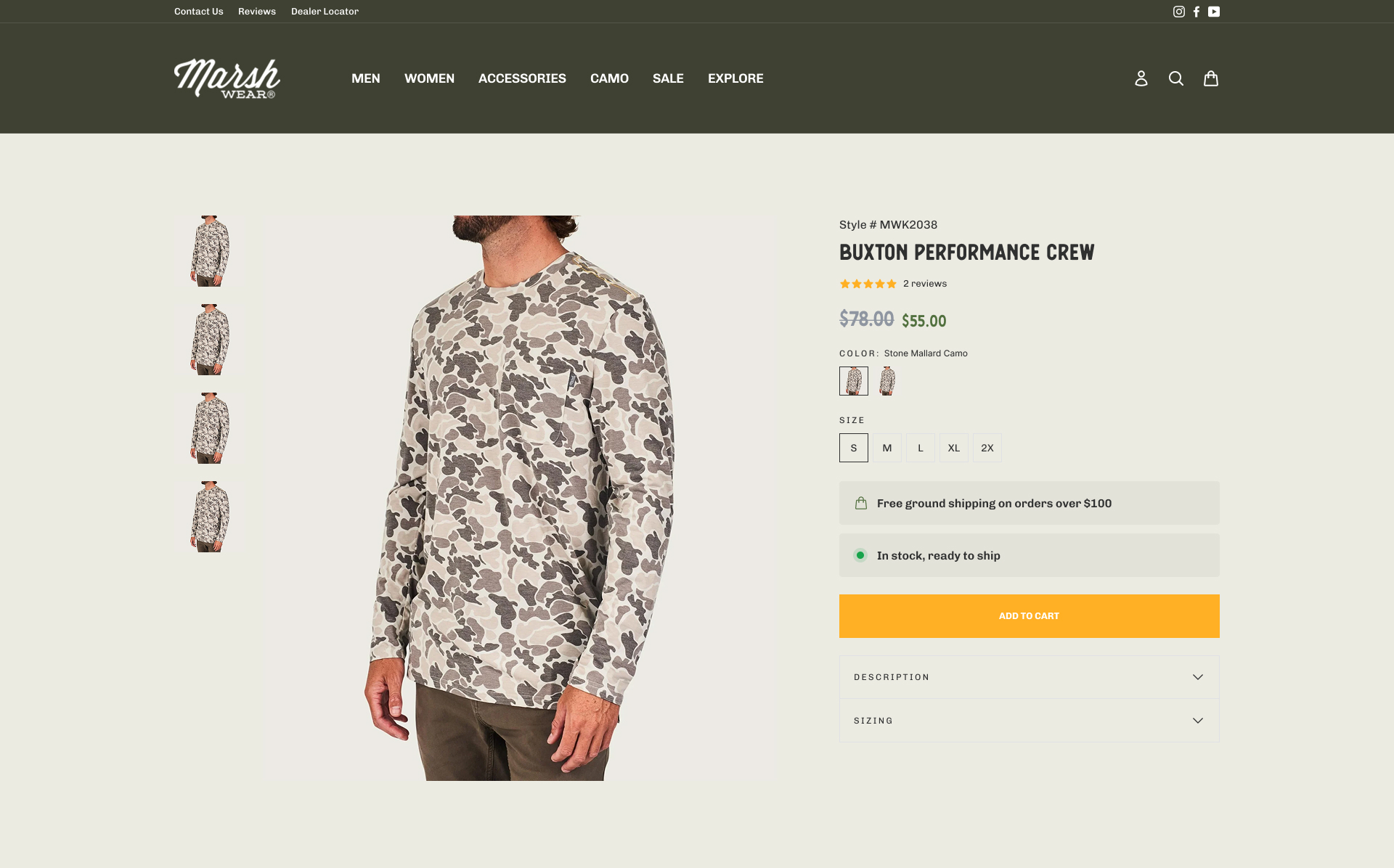

1. Price Increase on Hero SKUs

Tested a 15% price increase on three flagship weighted pillow SKUs. Conversion rate went up, not down. The original price was anchoring the product as "cheap," and the target audience associated higher price with higher quality.

For TooTimid: Your premium vibrators and toys could be underpriced relative to what your customers expect to pay for quality. A price test on your top 3-5 SKUs would tell us immediately whether you're leaving margin on the table.
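To judge whether a price test like this has actually moved conversion rather than just produced noise, a standard two-proportion z-test is one reasonable check. The sketch below is illustrative only: the function name and the visitor and order counts in the usage note are hypothetical, not figures from the pillow test or from your store.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of control (a) and variant (b).

    conv_* are order counts, n_* are visitor counts (hypothetical inputs).
    Returns the z statistic and the two-sided p-value.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both arms convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z_test(100, 1000, 150, 1000)` compares a 10% control rate with a 15% variant rate. For a price test, conversion is only half the picture, so we would read it alongside revenue per visitor.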

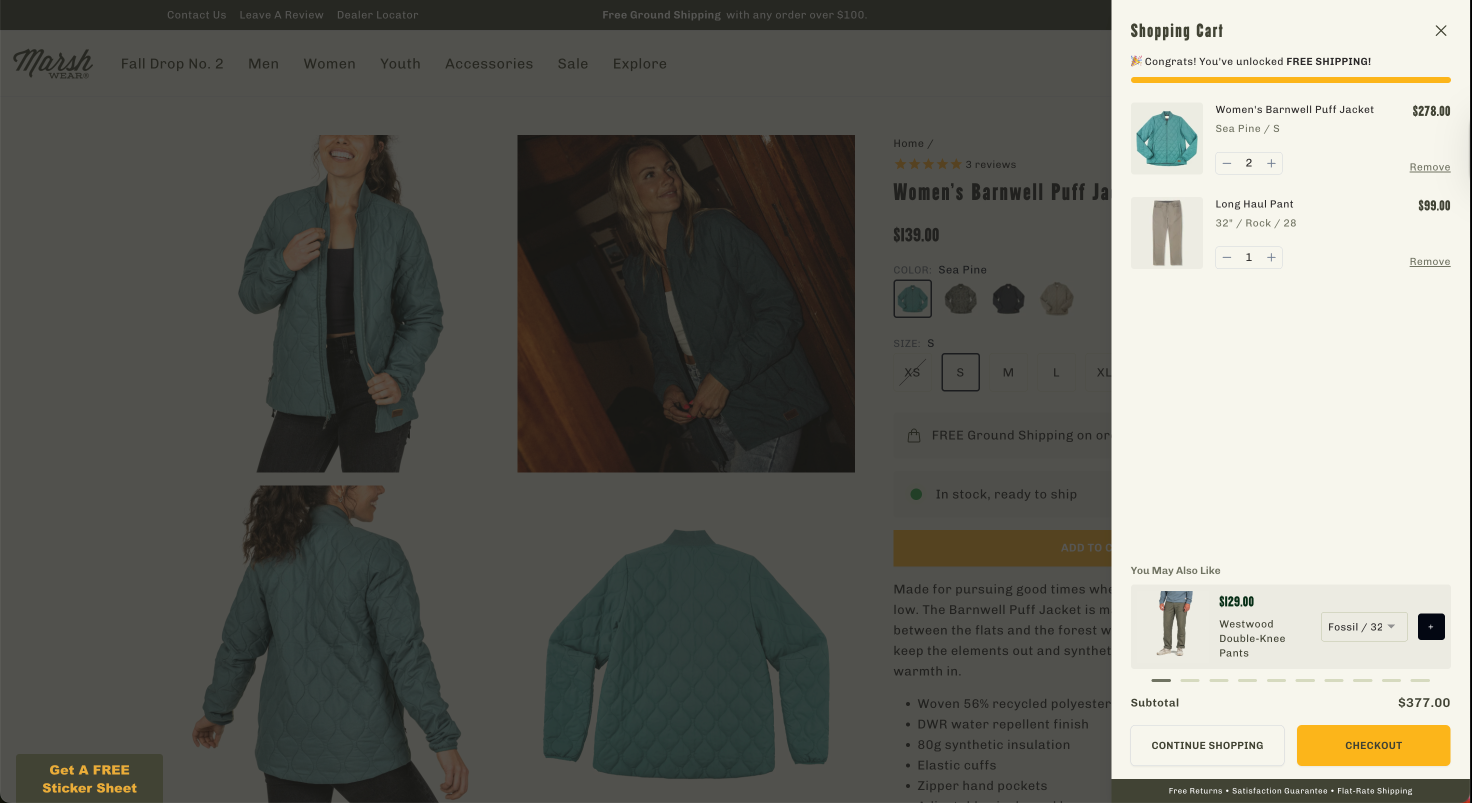

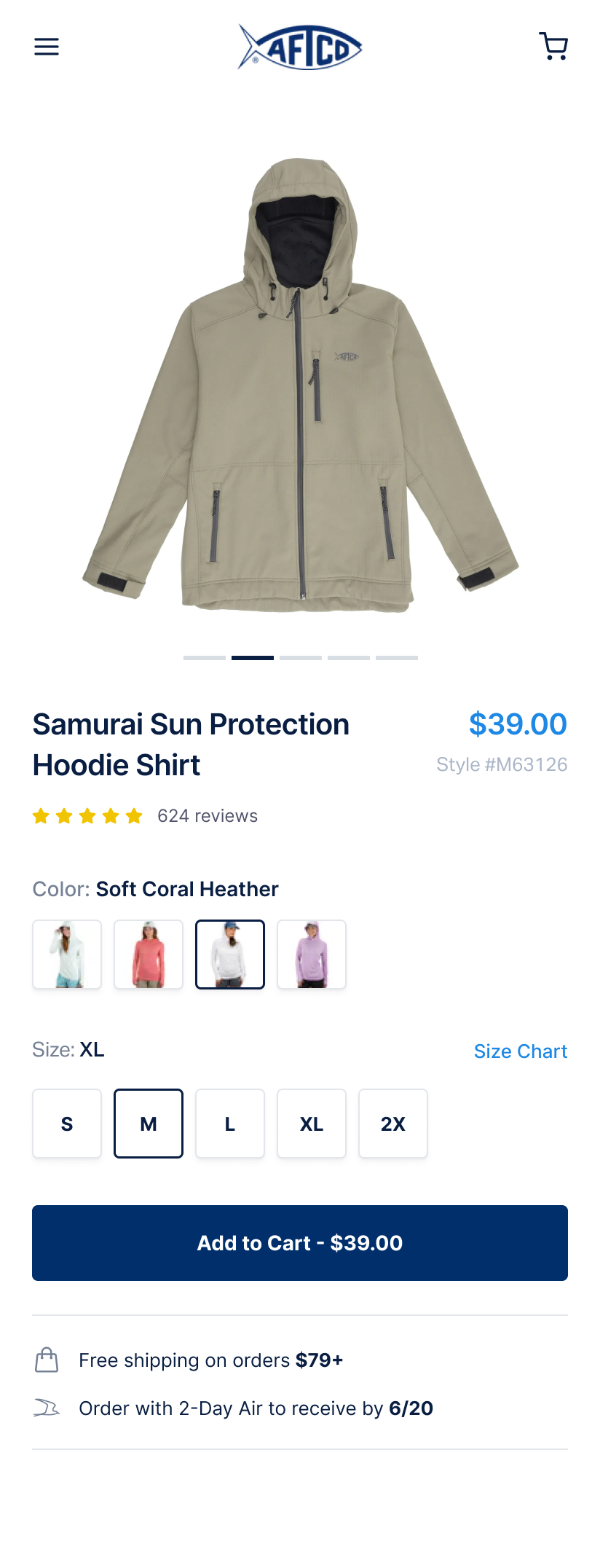

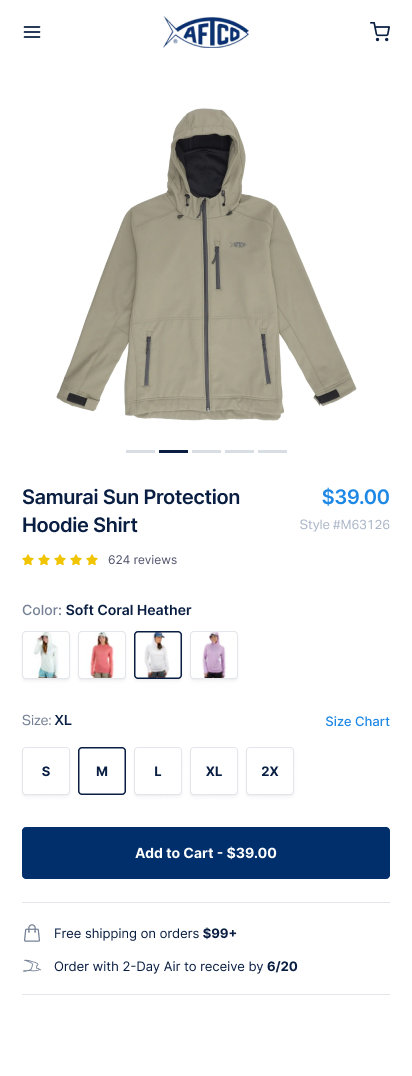

2. Free Shipping Threshold Optimization

Tested raising the free shipping threshold from $79 to $99. Pushed customers to add one more item to qualify. Average overshoot was 25-30% above the new threshold.

For TooTimid: Your current free shipping threshold is $59. Testing a higher threshold ($79 or $99) could meaningfully lift AOV. Your catalogue is deep enough that customers can easily add complementary items to reach a higher bar.
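A threshold test usually relies on a cart message telling the customer how far they are from free shipping. A minimal sketch of that logic follows, assuming a hypothetical `free_shipping_message` helper and message copy; the default of $99 is one of the candidate thresholds mentioned above, not a recommendation.

```python
def free_shipping_message(cart_total, threshold=99.0):
    """Return the cart nudge for a given cart total (hypothetical copy)."""
    gap = threshold - cart_total
    if gap <= 0:
        return "You've unlocked free shipping!"
    # Show the remaining amount so the customer knows what one more item buys.
    return f"Add ${gap:.2f} more to get free shipping"
```

Passing `threshold=79.0` gives the lower test variant, so the same helper can serve both arms of the experiment.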

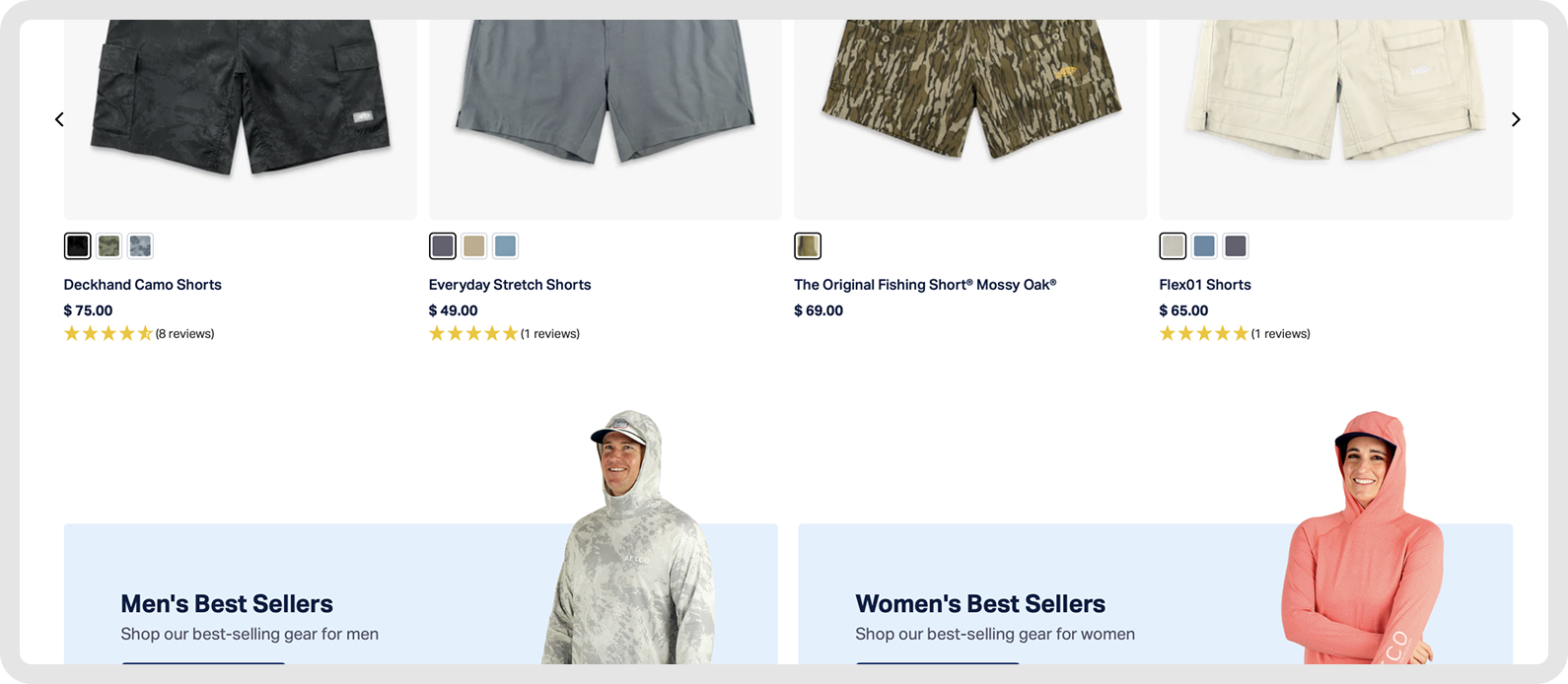

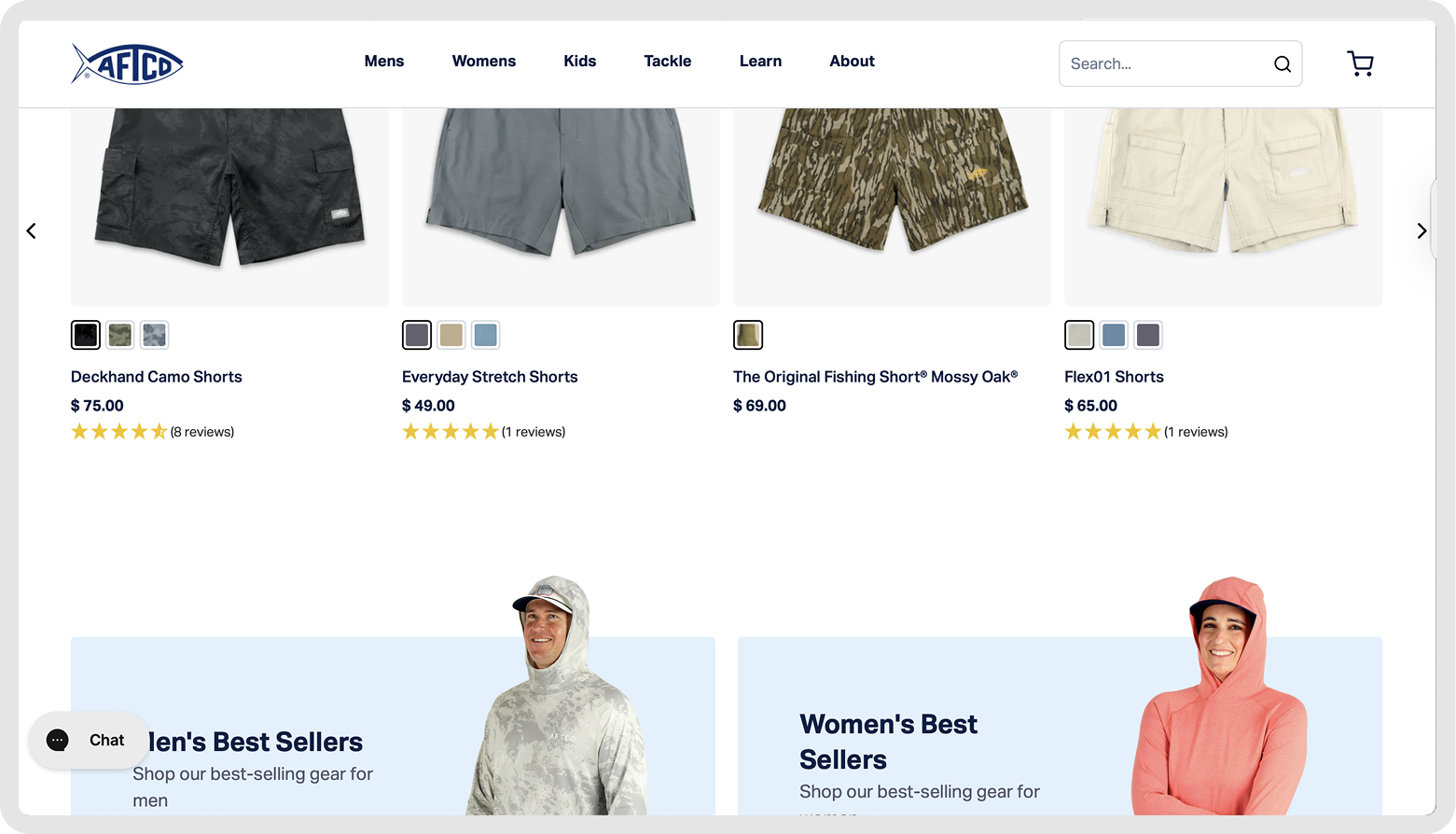

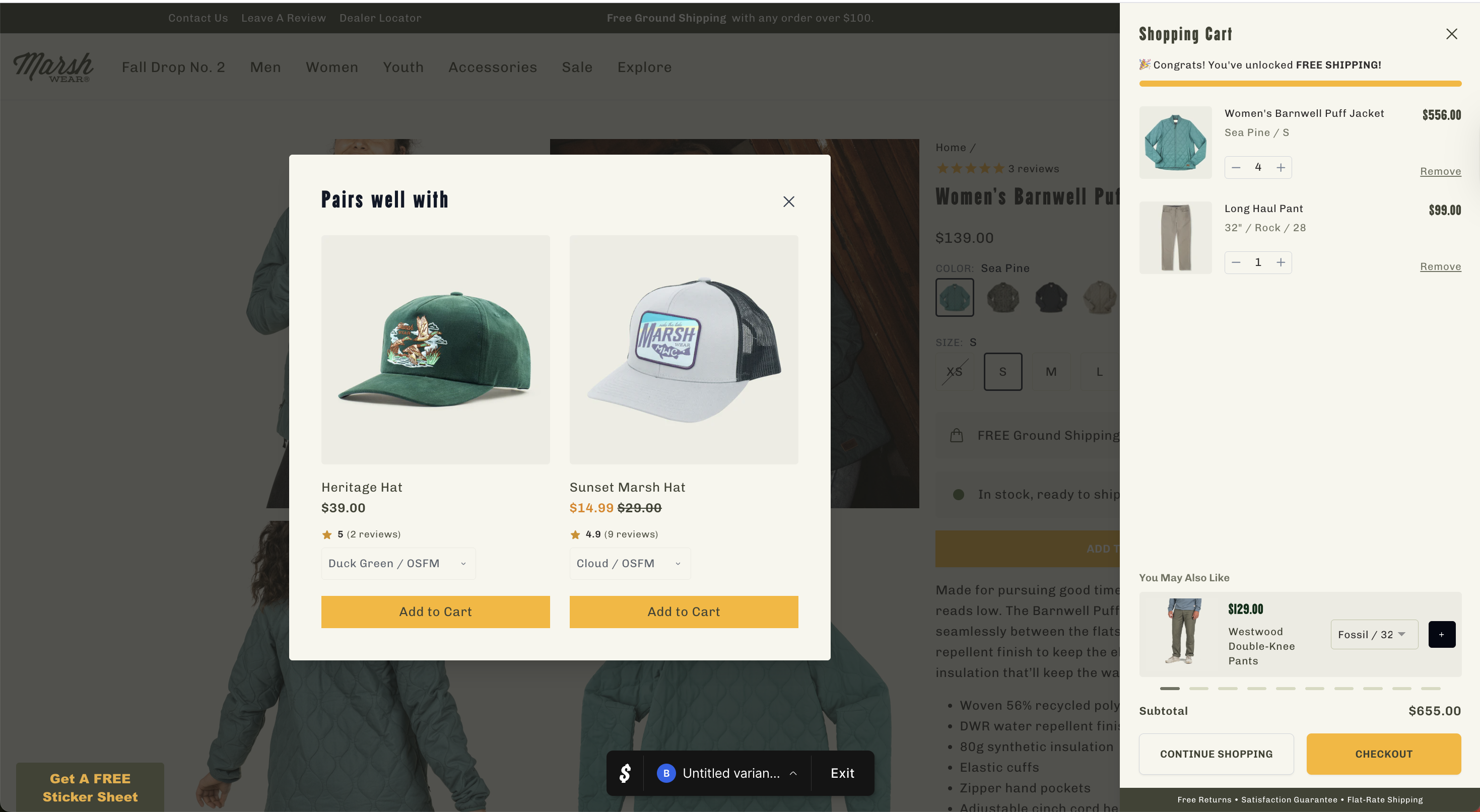

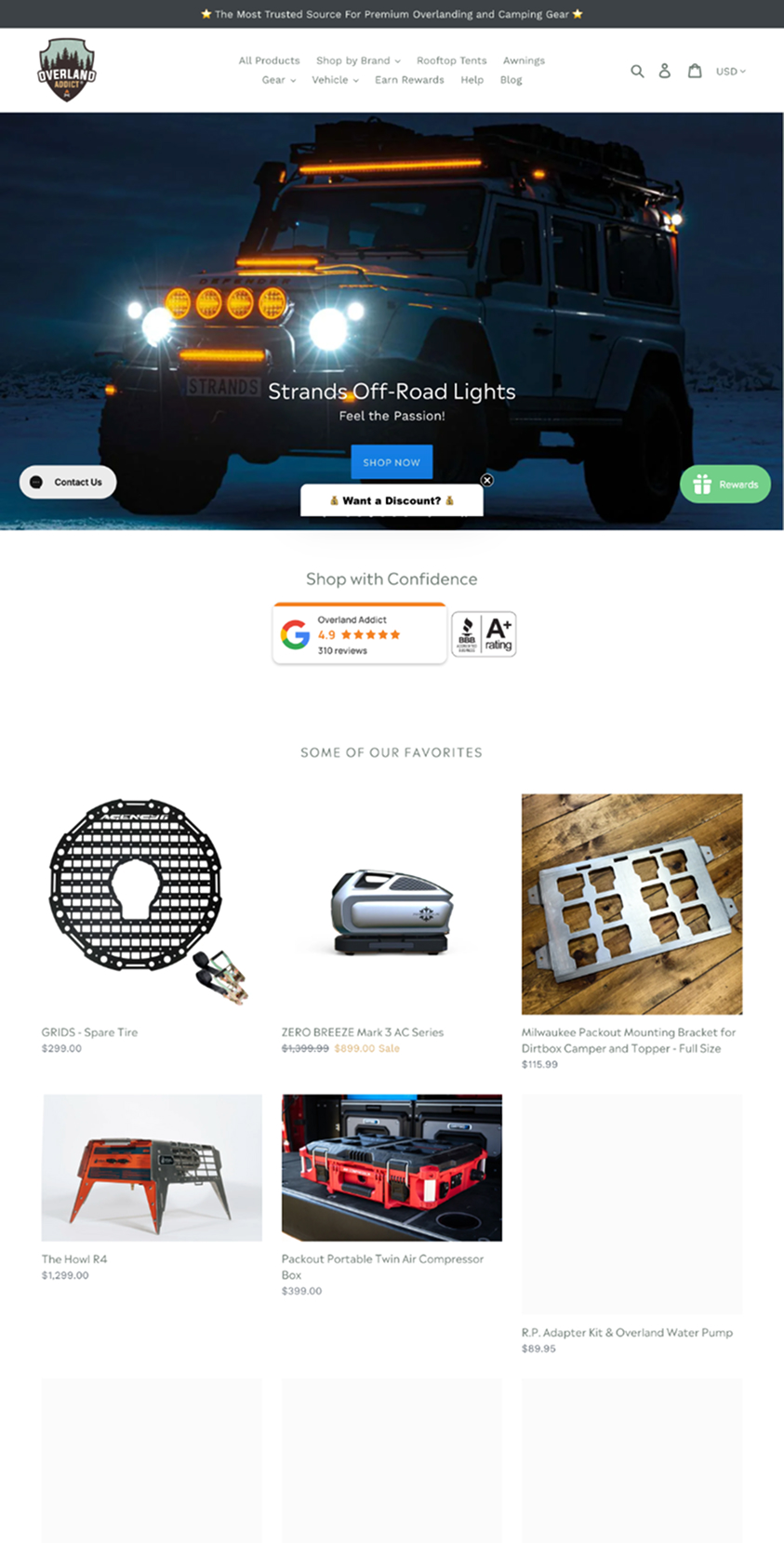

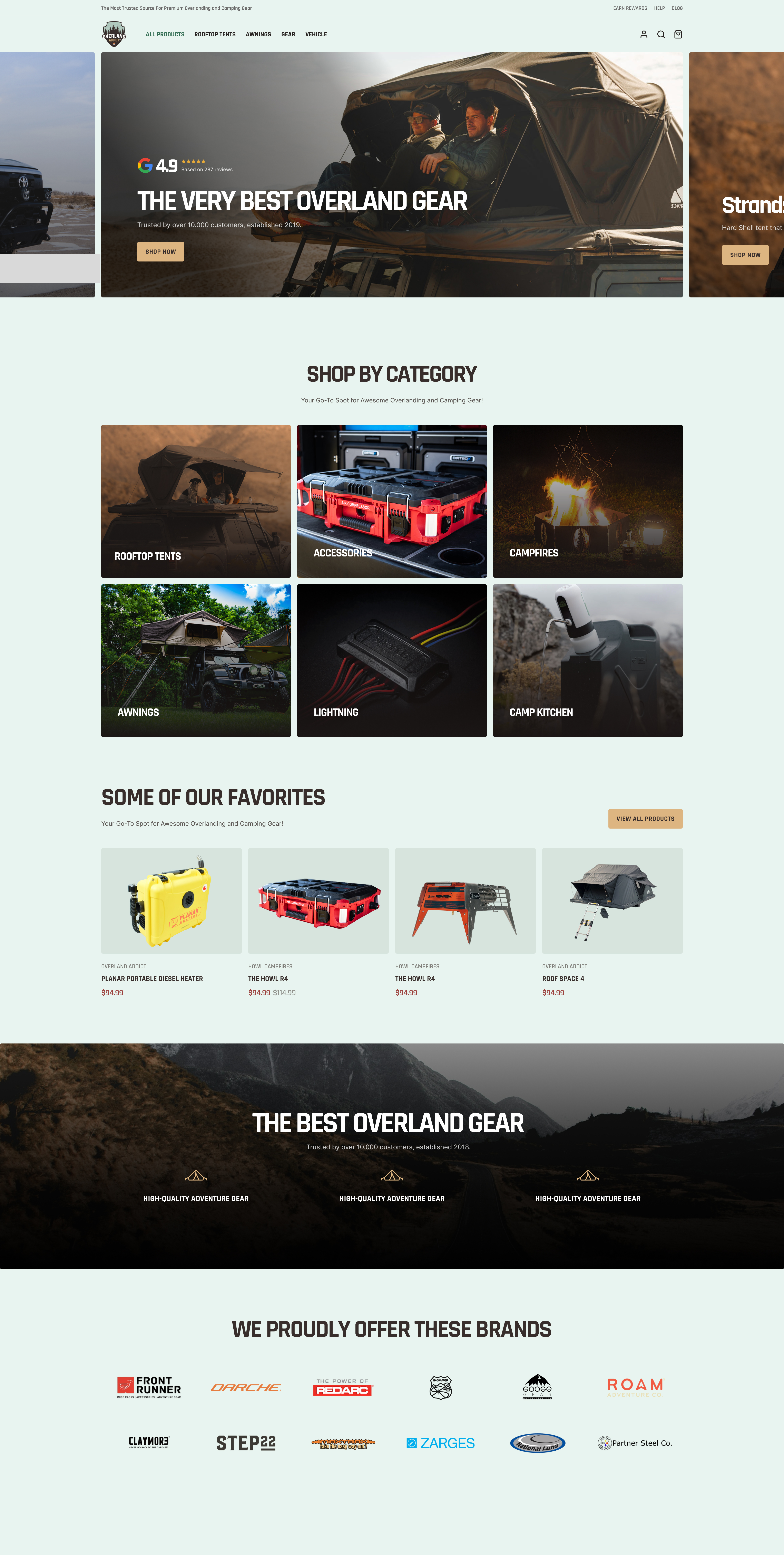

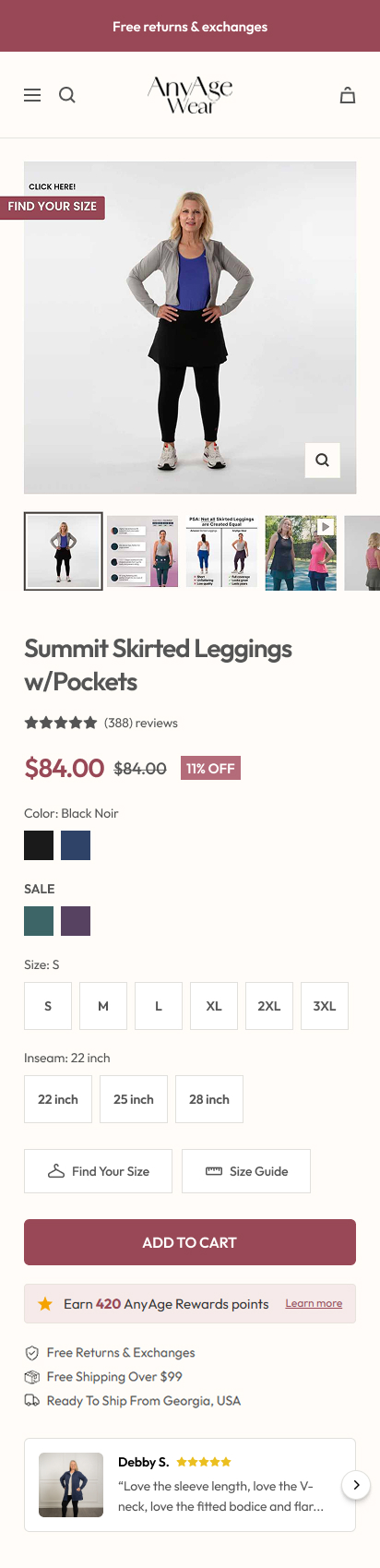

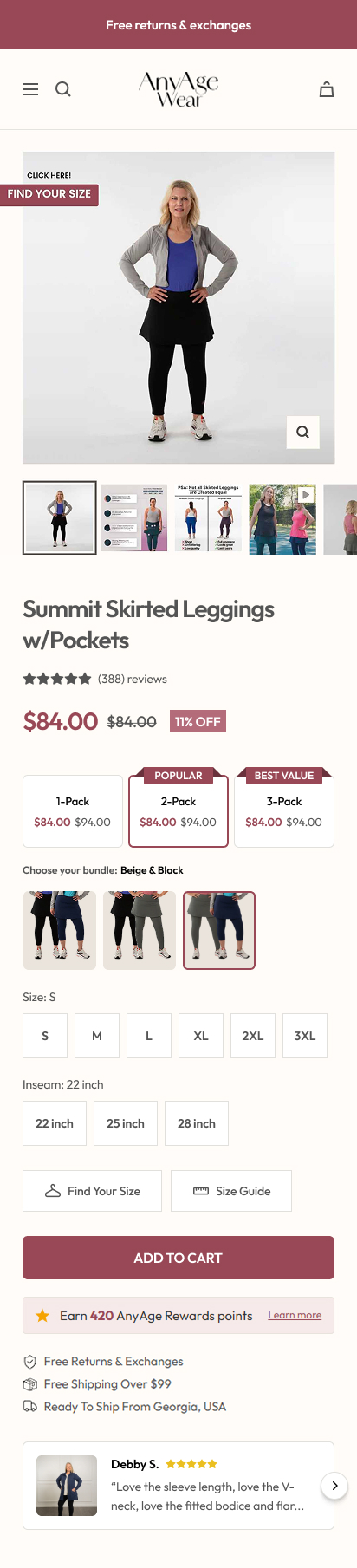

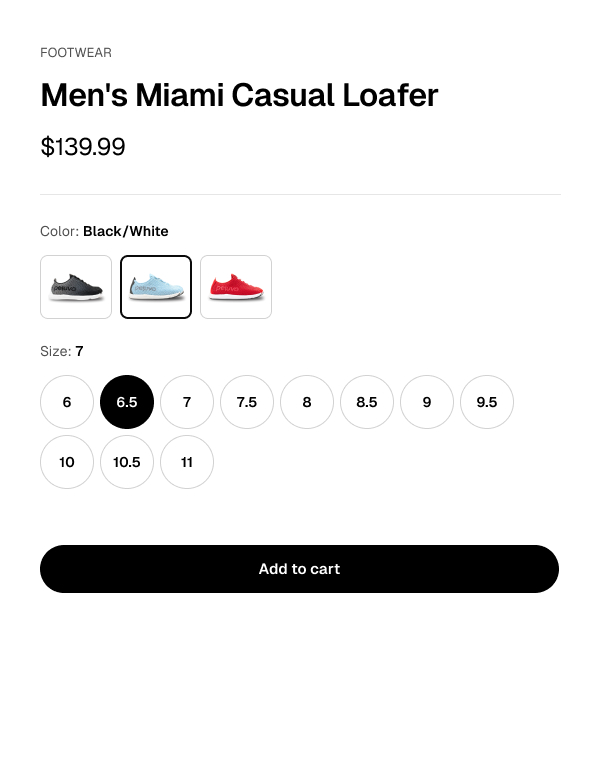

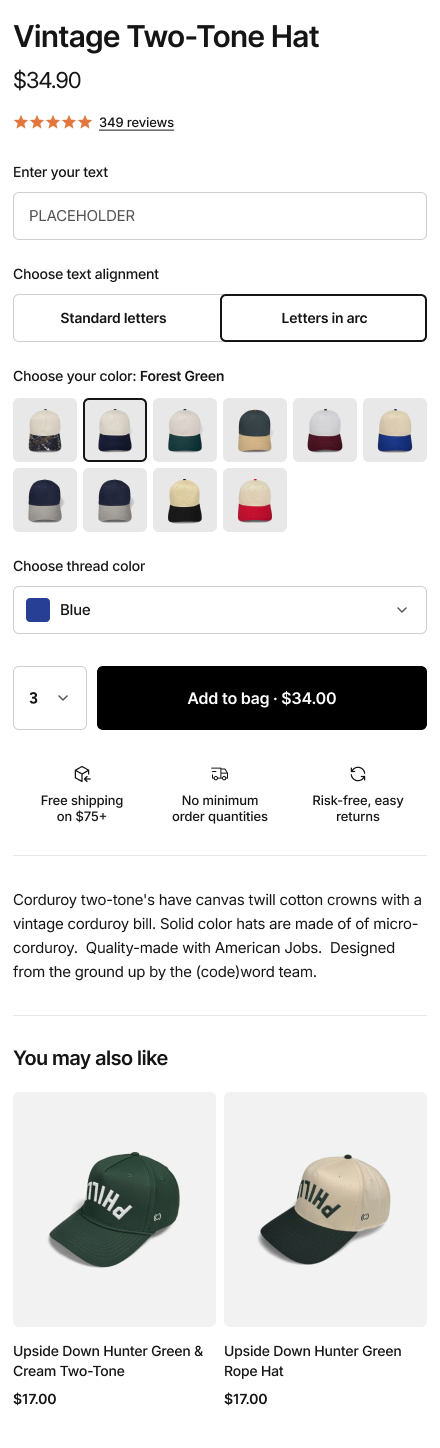

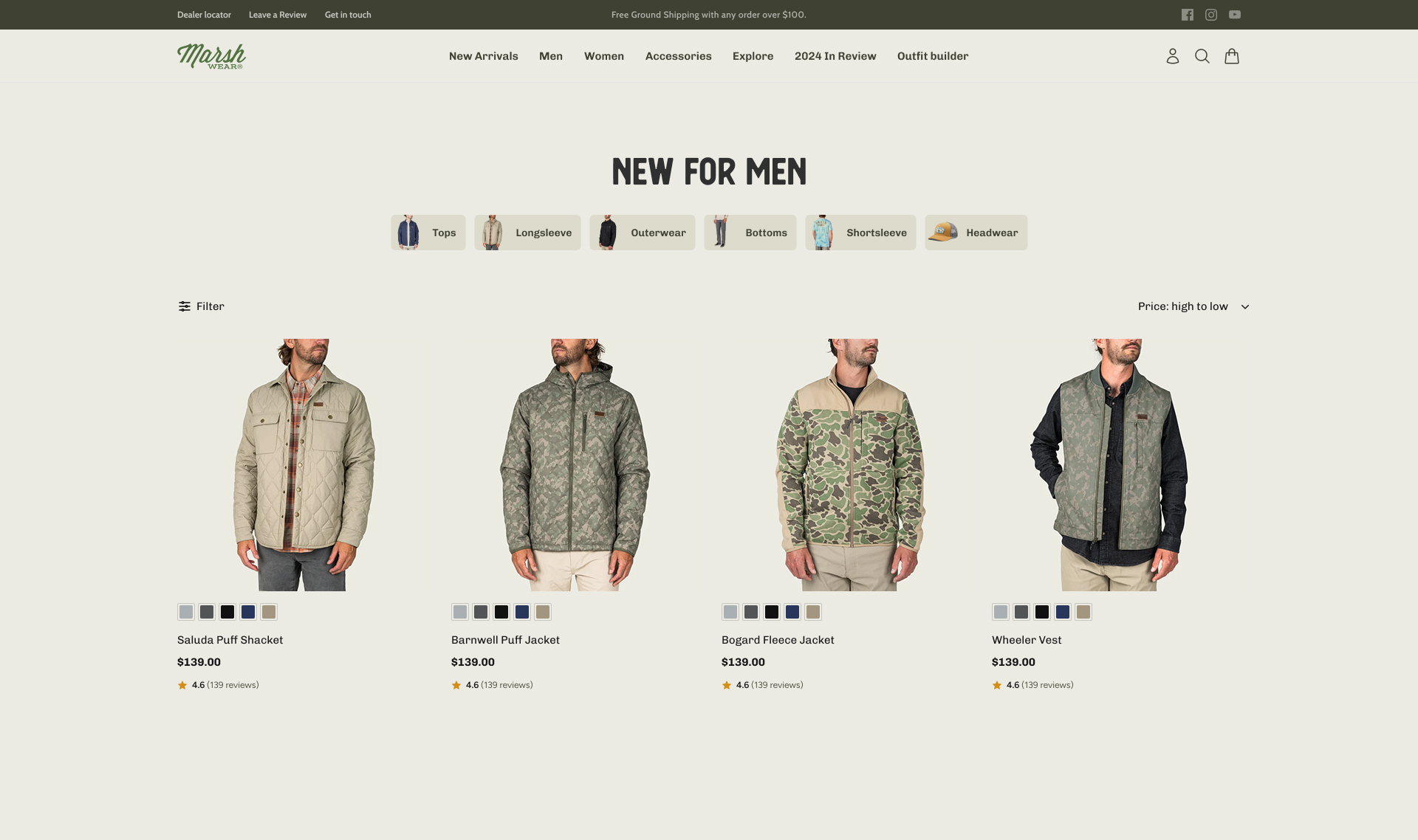

3. Starter Bundle Introduction

Introduced a bundled kit on the PDP pairing two bestsellers at a combined discount. Note that, unlike what the screenshots suggest, pricing is handled dynamically and the discount on the bundled items increases to around 15%. The bundle was positioned as the default recommended option.

For TooTimid: Couples kits, first-timer starter kits or "date night" bundles would map directly to your two largest customer segments (couples at 35% and first-time explorers at 25%). Bundles reduce decision paralysis and increase AOV in a single move.

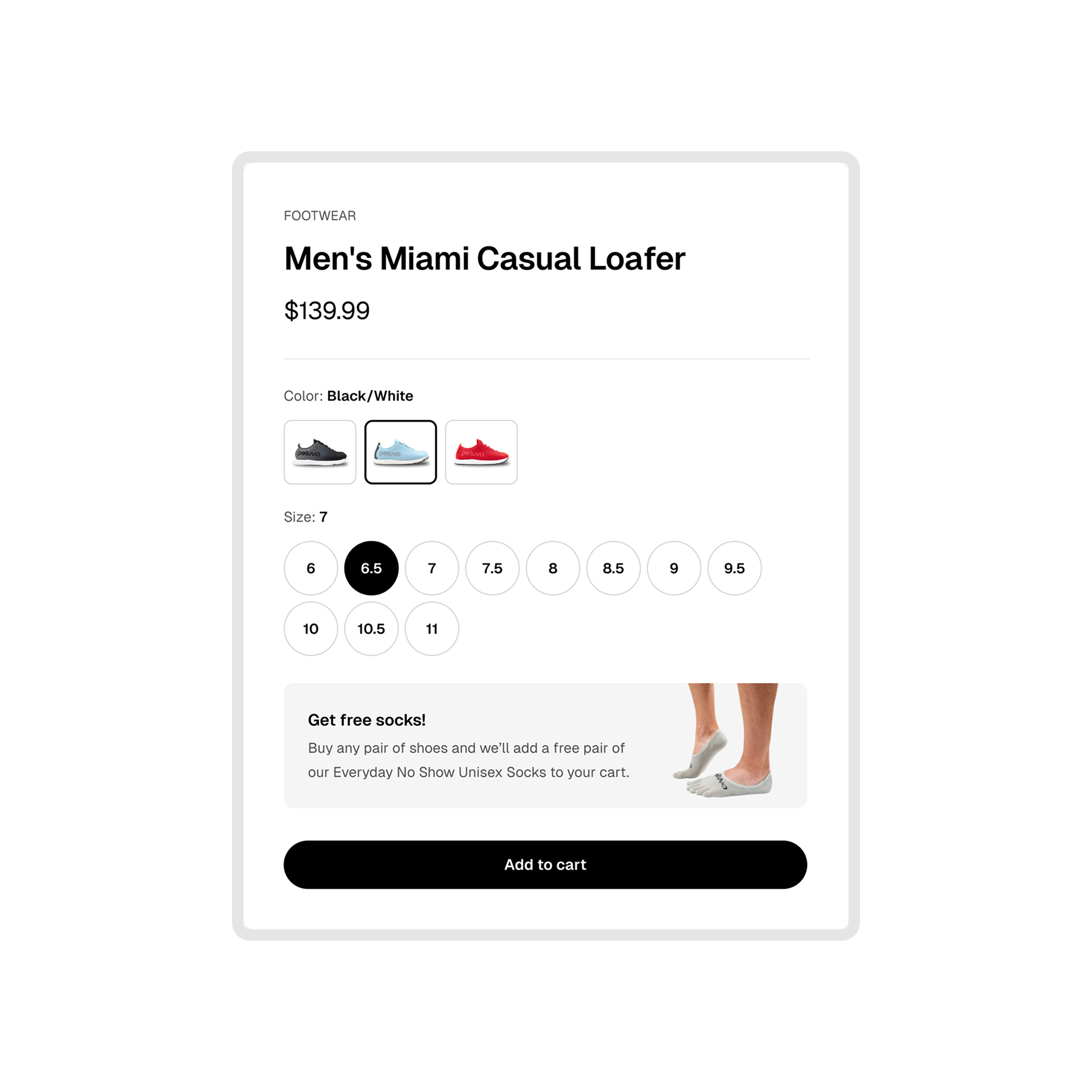

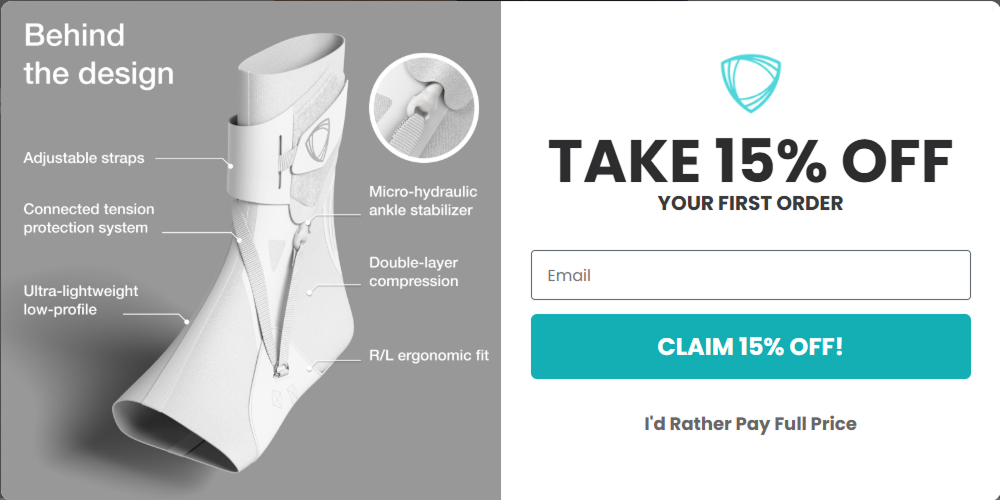

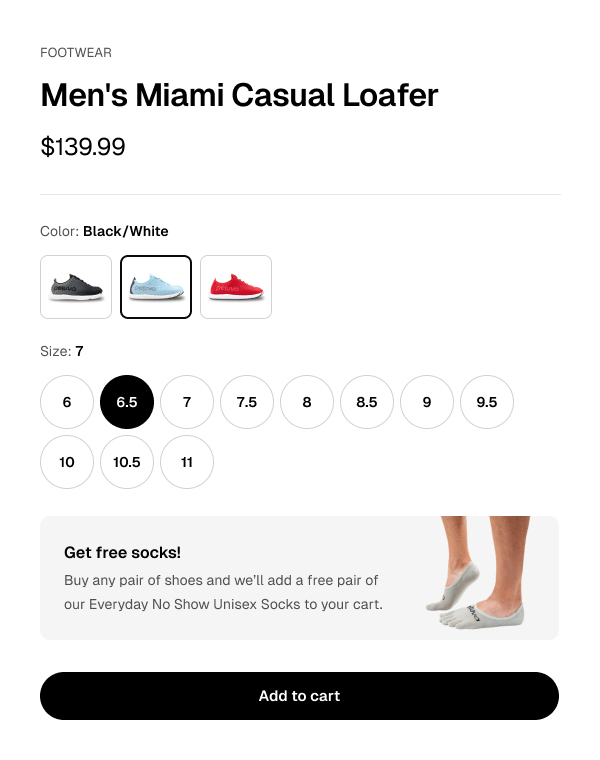

4. Gift With Purchase vs Flat Discount

Replaced a sitewide 15% discount code with a free branded accessory (retail value $25) on orders over $75. The gift with purchase outperformed the discount on conversion, AOV and margin.

For TooTimid: You already include a free gift with every order, but you're also running a permanent 50% sitewide discount code. Testing whether the free gift alone drives comparable results could recover significant margin.
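The margin argument comes down to simple per-order arithmetic: a percentage discount gives up revenue on the whole order, while a gift costs only its unit cost. The sketch below makes that comparison explicit. Every input is an assumption for illustration (function names, the cost-of-goods rate, and the gift's unit cost); we don't yet know your actual numbers.

```python
def margin_with_discount(order_value, cogs_rate, discount_rate):
    """Gross margin per order when a percentage discount is applied.

    cogs_rate is cost of goods as a share of the undiscounted order value.
    """
    revenue = order_value * (1 - discount_rate)
    return revenue - order_value * cogs_rate


def margin_with_gift(order_value, cogs_rate, gift_cost):
    """Gross margin per order at full price with a free gift included.

    gift_cost is what the gift costs the store, not its retail value.
    """
    return order_value - order_value * cogs_rate - gift_cost
```

With a hypothetical $100 order, a 40% cost of goods, and a gift that costs $5 to supply, the 15% discount leaves $45 of margin while the gift leaves $55. The same two functions can be rerun with a 50% discount rate once real cost data is available.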

5. Subscribe & Save on Consumables

Added a subscribe & save option on the PDP for consumable products. 10% discount on recurring orders with a toggle between one-time and subscription. Subscription set as the default selection.

For TooTimid: Lubricants, toy cleaners and other consumables are natural candidates for subscription. These products run out and need replenishing. A subscribe & save model generates predictable recurring revenue with no additional acquisition cost on repeat orders.

6. Discount Removal on Flagship

Removed the permanent discount code from the hero product and tested it at full price with stronger value messaging. Checkout completions actually increased because removing the discount code field eliminated the "let me go find a code" abandonment loop.

For TooTimid: You're running a permanent "SEXY50" code for 50% off sitewide. Testing what happens when the discount goes away, replaced with value messaging and the free gift offer, could be one of the single highest-impact changes on your store.

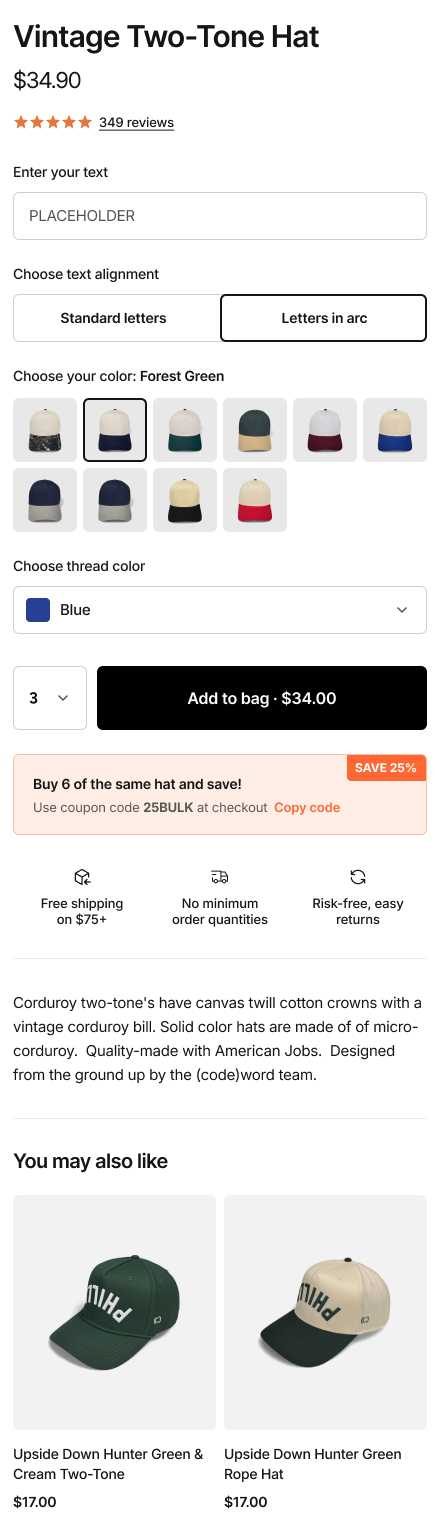

7. Spend-and-Save Threshold Tiers

Replaced a flat 10% discount with tiered spend-and-save thresholds: spend $100 save 10%, spend $150 save 15%, spend $200 save 20%. Most customers aimed for the middle tier, overshooting their original cart value by 25-40%.

For TooTimid: Tiered spend-and-save could replace the blanket 50% code. It gives customers a reason to add more items while maintaining healthier margins at every tier.
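The tier logic itself is small. Here is a minimal sketch using the thresholds from the experiment above ($100 / $150 / $200); the function names are hypothetical, and the tiers for your store would need to be set against your own AOV distribution.

```python
# (threshold in dollars, discount rate), highest tier first.
TIERS = [(200, 0.20), (150, 0.15), (100, 0.10)]


def spend_and_save_rate(subtotal):
    """Return the discount rate earned by a cart subtotal."""
    for threshold, rate in TIERS:
        if subtotal >= threshold:
            return rate
    return 0.0


def gap_to_next_tier(subtotal):
    """Return dollars needed to reach the next tier, or None at the top tier."""
    higher = [threshold for threshold, _ in TIERS if threshold > subtotal]
    if not higher:
        return None
    return min(higher) - subtotal
```

A $120 cart earns 10% and is $30 short of the 15% tier, which is the figure a cart progress bar would display to encourage one more item.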

8. Post-Purchase One-Click Upsell

Added a one-click upsell page between checkout completion and the thank-you page. Offered complementary products with a "Buy 1 Get 1 40% Off" incentive, purchasable with a single tap. No re-entering payment details. 14% of customers took the offer.

For TooTimid: Post-purchase upsells are especially powerful in your category because the customer has already committed. They've overcome the privacy anxiety and entered payment details, so adding a complementary item at that point is frictionless. We're not sure whether you're currently running post-purchase upsells, but we'd like to test them with different combinations of products and offers.

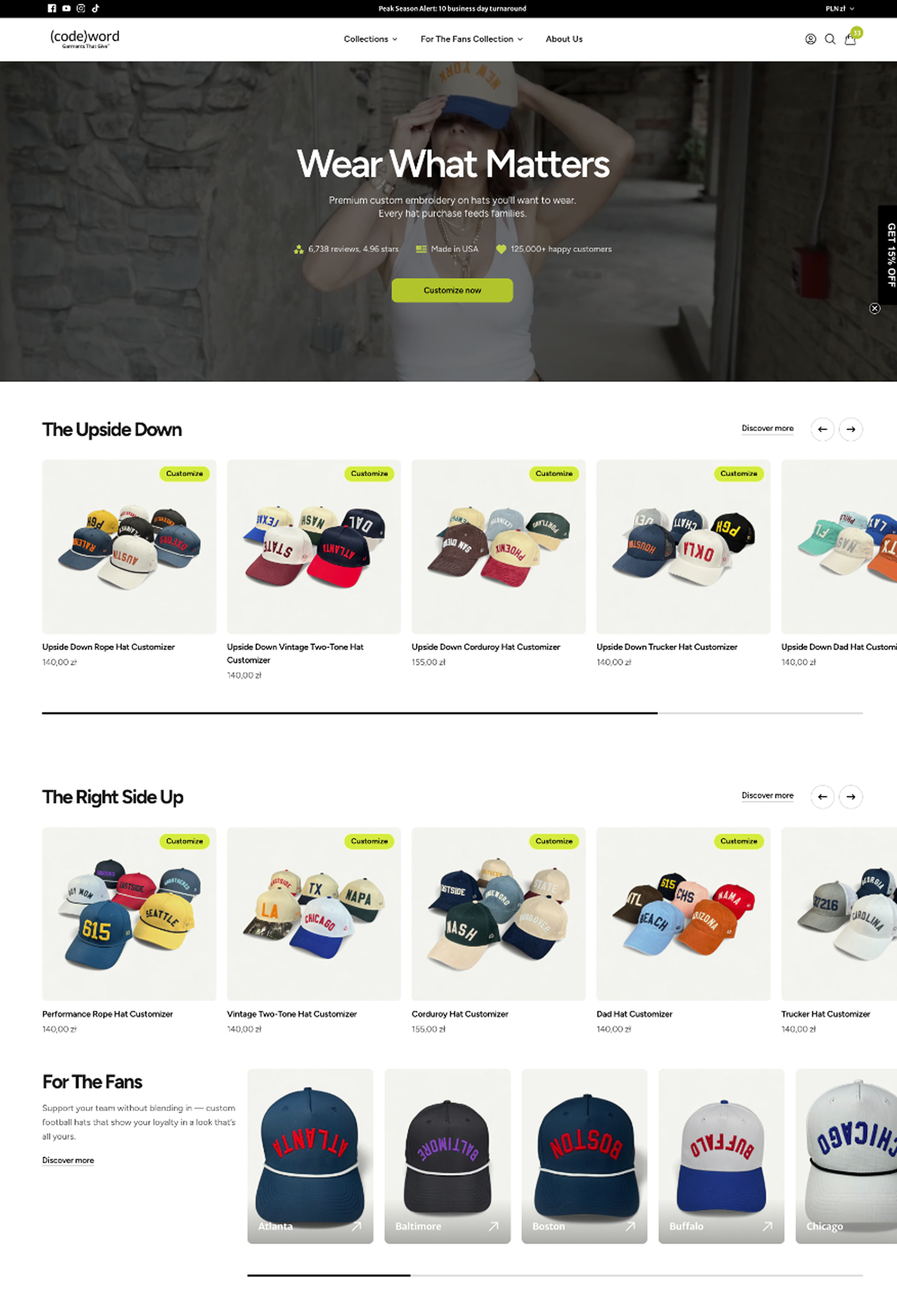

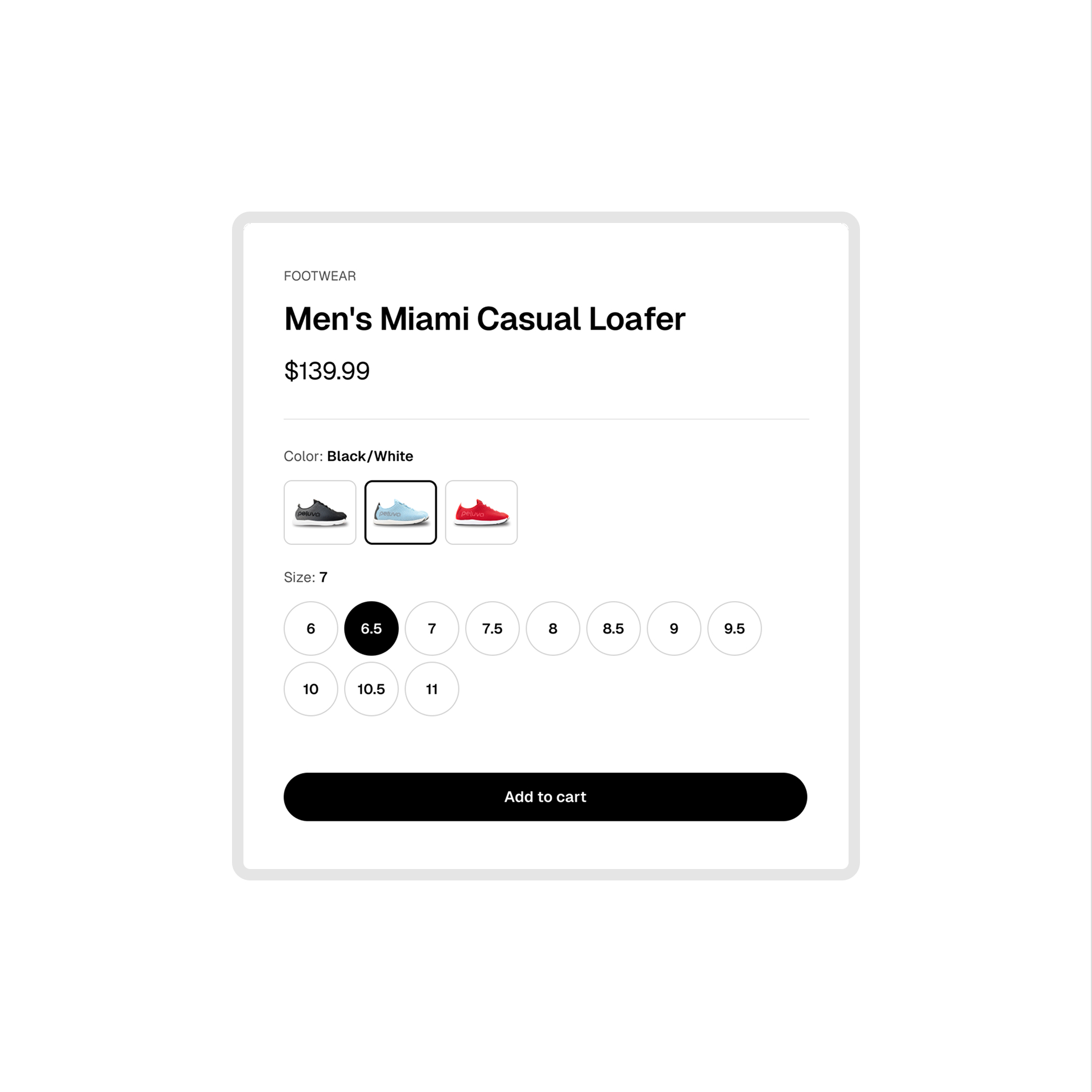

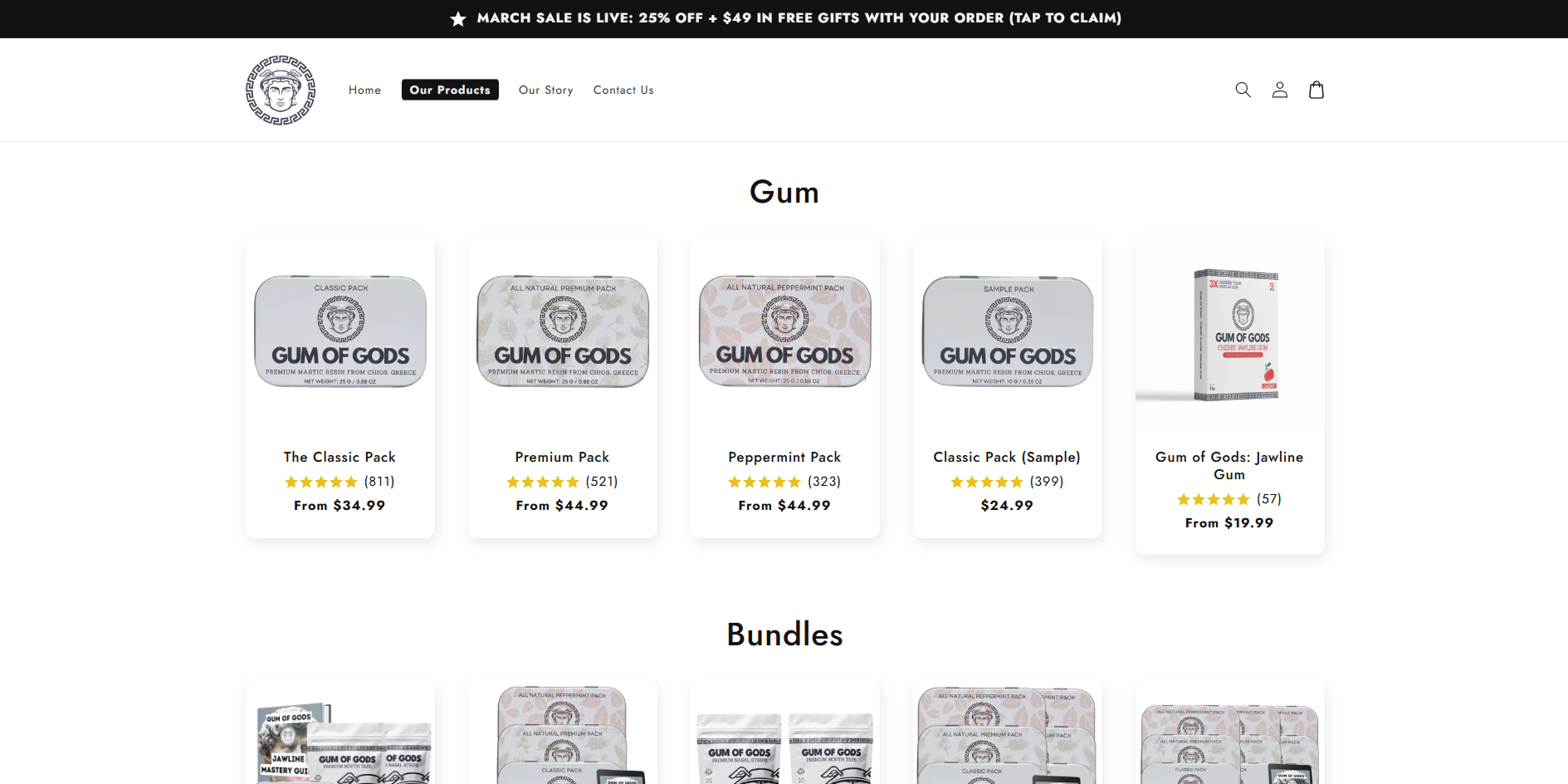

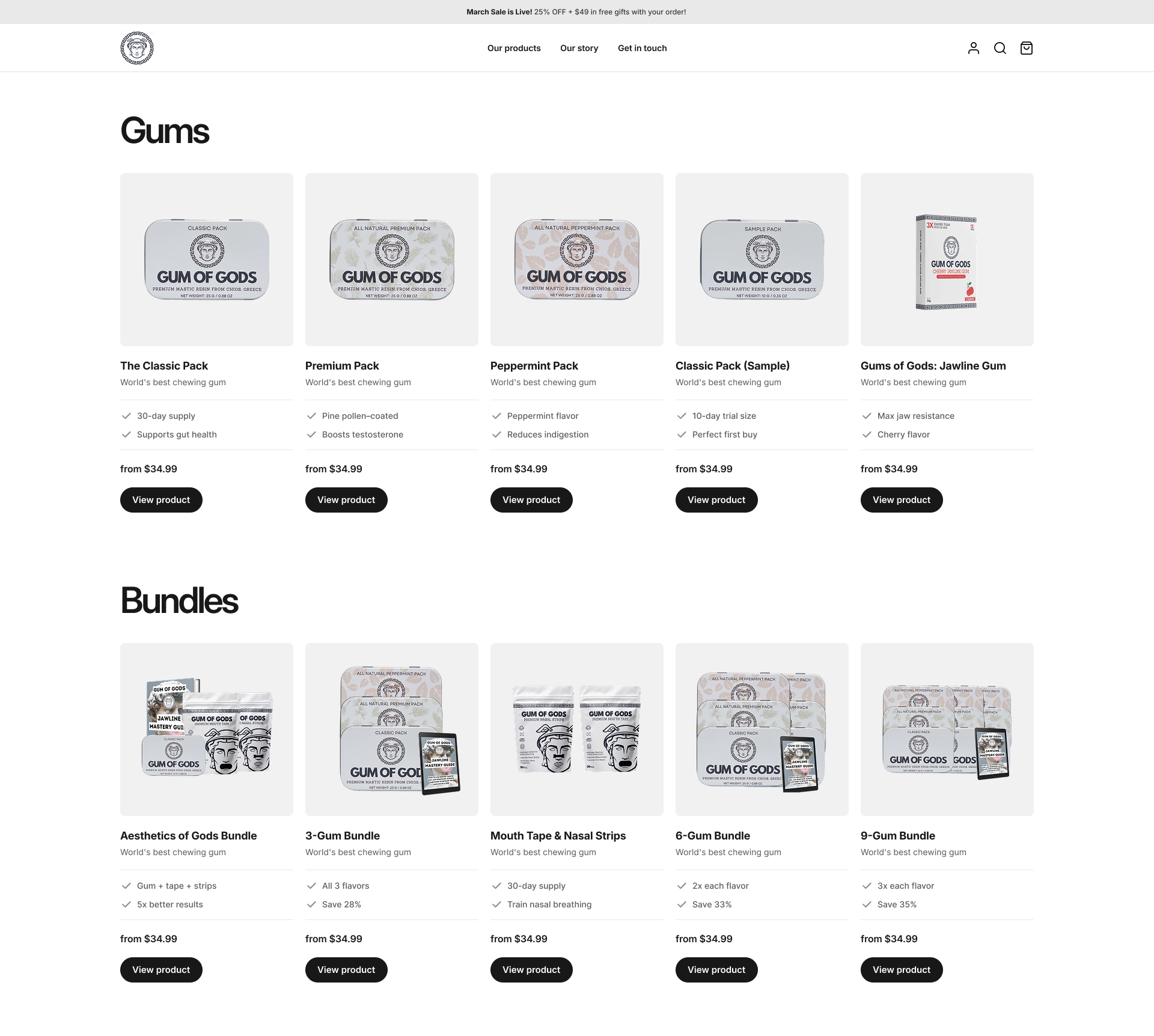

9. Starter Kit for New Customers

Created a $49 "First Timer Kit" with curated entry-level products bundled at a slight discount. Targeted at new visitors from paid ads. Reduced decision paralysis for first-time buyers who didn't know where to start.

For TooTimid: 25% of your customers are first-time explorers. A "New to This? Start Here" kit, curated by your team and priced under $50 with the free gift included, would give first-timers a safe entry point. Starter kit buyers have 3.1x higher 12-month LTV across our client base.

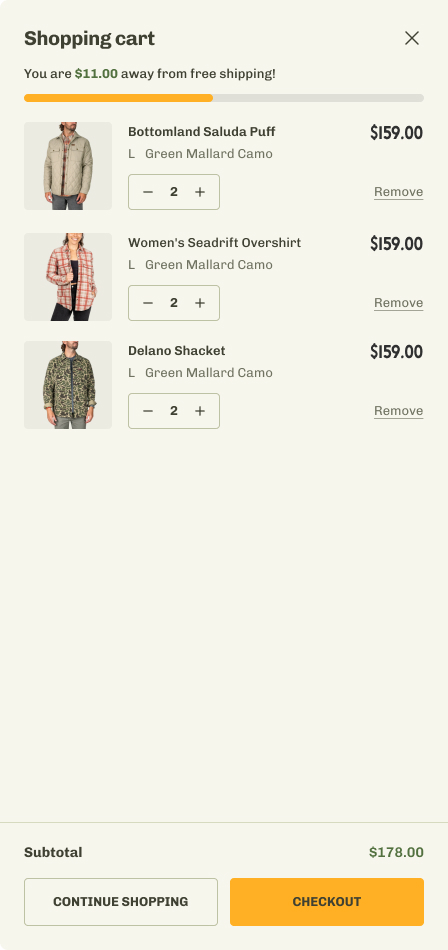

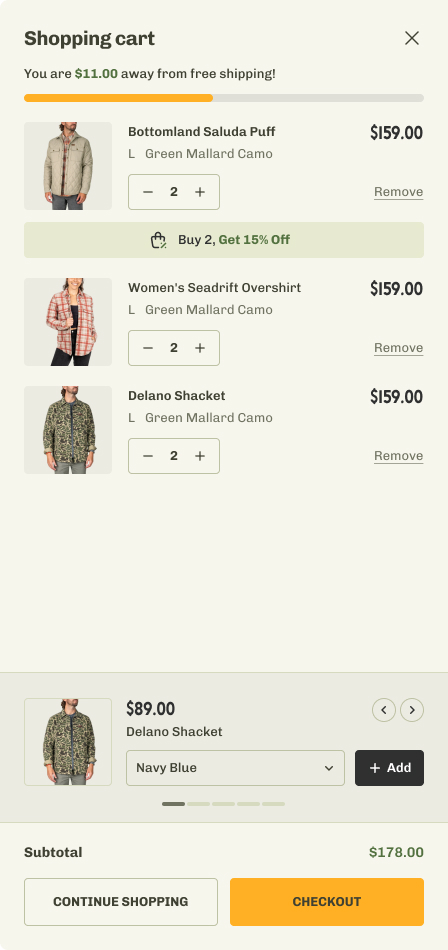

10. Volume Discount Incentive in Cart

Added a "Buy 2, Get 15% Off" incentive badge directly on the product card in the cart, paired with a cross-sell carousel at the bottom. Encouraged customers to add a second item from the same category.

For TooTimid: Volume incentives work well with accessories and consumables where cost of goods is low. Testing a volume offer against your current flat 50% discount would tell us whether structured offers drive better unit economics.